NotebookLM for Research Papers

Transform any ML/AI paper into structured reviews, visual summaries, and actionable insights. Stop drowning in arXiv. Start understanding.

How It Works

Three steps. Complete understanding.

Paste a Link

Drop in an arXiv or OpenReview URL, or upload a PDF directly.

AI Analyzes

Multi-agent pipeline extracts, reviews, critiques, and refines.

Get Insights

A structured review and visual summary, saved to your library.

Built for ML Researchers

Every feature designed for how researchers actually work.

Structured Reviews

Get TL;DR, key contributions, technical details, and limitations — the format researchers actually need.

Deep Analysis

Multi-agent pipeline extracts, reviews, critiques, and refines. Thorough analysis, not shallow summaries.

Visual Summaries

AI-generated comic panels that capture key ideas. Perfect for presentations and sharing.

Paper Library

Build your personal knowledge base. Search, organize, and revisit papers you've analyzed.

Custom Criteria

Define your own review templates. Focus on what matters for your research area.

Deep Research

AI agents that go beyond single papers. Multi-paper synthesis and literature review.

Team Workspaces

Shared libraries for labs. Assign papers, discuss, build collective knowledge.

Paper Roast 🔥

Brutally honest critique mode. Exposes weaknesses, questions assumptions. Not for the faint-hearted.

???

Something special we're cooking up. Request early access to find out first.

See It In Action

Real output from our review pipeline

The authors introduce MOCO, a unified Python library that implements and benchmarks 26 different model collaboration algorithms. These span four levels of information exchange—API routing, text-based debate, logit fusion, and parameter merging—tested across 25 datasets.

- First rigorous comparative framework for model collaboration methods

- Quantifies "collaborative emergence": collaborative systems solve 18.5% of problems that no individual model can solve

- Weight-level collaboration achieves the best results (60.1 average vs. 53.5 global average)

Weight-level and logit-level methods require a shared architecture or vocabulary, so they cannot combine heterogeneous APIs (e.g., GPT-4 + Claude). Multi-turn debate latency remains a production bottleneck.

Full review at ArXivIQ →

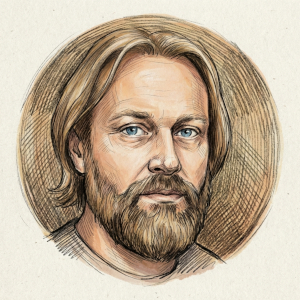

Built by Grigory Sapunov

PhD in AI • Google Developer Expert in ML • CTO at Intento • Author of "Deep Learning with JAX" (Manning)

"I've been reading ML papers for 20 years. In 2024, I admitted defeat — I couldn't keep up anymore. So I built an AI system to help. Now I'm turning it into a tool for everyone."

Ready to read smarter?

Join the waitlist. Be the first to try PaperLM when we launch.